I recently had the pleasure of installing a 4-node SolidFire 19210. Although the system was being used strictly to present Fibre Channel block devices to the hosts, there was still a bit of networking involved. If you want the TL;DR version, the diagrams below cover most of what I explain here.

The system came with six Dell 1U nodes. Four of the nodes were full of disks (the storage nodes, or S-nodes). The other two had no disks but had extra I/O cards in the back, FC and 10Gb (the FC gateways, or F-nodes).

The first step was to image the nodes using the Return To Factory Image (RTFI) process. I used version 10.1 and downloaded the “rtfi” ISO image from the link below.

https://mysupport.netapp.com/NOW/download/software/sf_elementos/10.1/download.shtml

With the ISO you can create a bootable USB key:

- Use a Windows-based computer to format a USB key as FAT32 with the default block size (a full format works better than a quick format)

- Download UNetbootin from http://unetbootin.sourceforge.net/

- Install the program on your Windows system

- Insert the USB key before starting UNetbootin

- Start UNetbootin

- After the program opens, select the following options:

  - Select your downloaded image […]

  - Select Type: USB drive

  - Drive: Select your USB key

- Press OK and wait for key creation to complete (this can take 4 to 5 minutes)

- Make sure the process completes, then close UNetbootin

Simply plug the USB key into the back of the server and reboot. On boot, it will ask a couple of questions, install Element OS, and then shut itself down. On the next boot, it comes up in what they call the Terminal User Interface (TUI), in DHCP mode. If you have DHCP, the node will pick up an IP address, and the TUI shows which IP it obtained. You can then point your web browser at that IP. Alternatively, you can use the TUI to set a static IP address for the 1GbBond interface.

Once the IPs were set, I connected to the management interface on each node to continue my configuration, although you can keep using the TUI if you prefer. To connect to the management interface, go to https://IP_Address:442. This interface is called the Node UI.

Using the Node UI, set the 10Gb IP information and the hostname. The 10Gb network should be a private, non-routable network with jumbo frames enabled end to end. After setting the IPs, I rebooted the servers. I then logged back in, set the cluster name on each node, and rebooted again. The nodes came back up in a pending state. To create the cluster, I browsed to one of the nodes' IP addresses, which brought up the cluster creation wizard (this is NOT on port 442; it is on port 80). Using the wizard, I created the cluster. You will assign an “mVIP” and an “sVIP”. These are clustered IP addresses for the node IPs: the mVIP for the 1GbBond interfaces and the sVIP for the 10GbBond interfaces. The management interface is at the mVIP, and storage traffic runs over the sVIP.

Once the cluster was created, we downloaded the mNode OVA. This is a VM that sends telemetry data to Active IQ, runs SNMP, handles logging, and performs a couple of other functions. We were using VMware, so we used the image with “VCP” in the filename since it includes the vCenter plugin.

https://mysupport.netapp.com/NOW/download/software/sf_elementos/10.1/download.shtml

Using this link and the link below, we were able to import the mNode into vCenter quickly. Once it had an IP, we used that IP to connect to port 9443 in a web browser and register the plugin to vCenter with credentials.

https://kb.netapp.com/app/answers/answer_view/a_id/1030112

I then connected to the mVIP and under Clusters–>FC Ports, I retrieved the WWPNs for zoning purposes.

At this point your SAN should be mostly ready. You will need to create some logins at the mVIP, along with volumes and Volume Access Groups. Once you zone your hosts in using single-initiator zoning, you should be able to scan the disk bus on your hosts and see your LUNs!
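Single-initiator zoning means each zone contains exactly one host initiator WWPN plus the target WWPNs it needs to reach. Here is a minimal sketch of how you might plan the zones for one fabric before typing them into the switch. The function name, the `z_` zone prefix, and every WWPN shown are made up for illustration; use the WWPNs from your own hosts and from the FC Ports page.

```python
def single_initiator_zones(initiators, targets):
    """Build one zone per host initiator.

    initiators: dict of alias -> initiator WWPN (hypothetical values below)
    targets:    list of target WWPNs reachable on this fabric
    Returns a dict of zone name -> member WWPNs, initiator first.
    """
    zones = {}
    for alias, wwpn in sorted(initiators.items()):
        # Exactly one initiator per zone, followed by every target.
        zones[f"z_{alias}"] = [wwpn] + sorted(targets)
    return zones


if __name__ == "__main__":
    # All WWPNs here are placeholders, not real SolidFire or host values.
    hosts = {
        "host01_hba0": "10:00:00:00:00:00:00:01",
        "host02_hba0": "10:00:00:00:00:00:00:02",
    }
    fnode_targets = ["50:00:00:00:00:00:00:a2", "50:00:00:00:00:00:00:a1"]
    for zone, members in single_initiator_zones(hosts, fnode_targets).items():
        print(zone, members)
```

With two fabrics, you would run this once per fabric with only the initiators and targets that are physically connected to that fabric.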

As mentioned there were some network connections to deal with. My notes are below.

I am not going to cover the 1GbE connections. Those are really straightforward. One to each switch. Make sure the ports are on the right VLAN. The host does NIC teaming. Done.

iDRAC is even easier. Nothing special. Just run a cable from the iDRAC port to your OOB switch and you are done with that too. IP address is set through the BIOS.

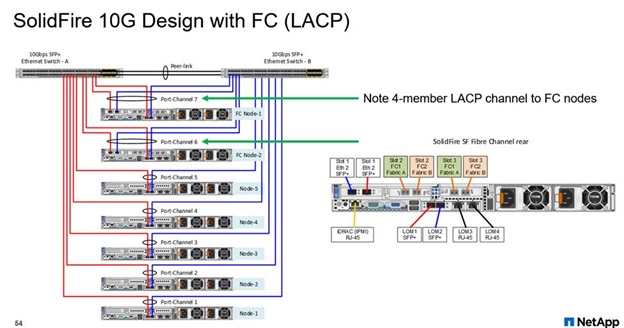

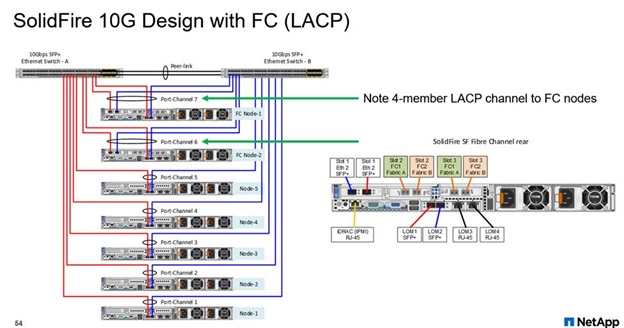

FC Gateways (F-nodes)

The FC gateways (F-nodes) have 4x 10Gb ports on each node: 2 are onboard, like the S-nodes, and 2 are on a card in Slot 1. Each F-node also has 2x dual-port FC cards.

From F-node1, we cabled port0 (onboard) and port0 (card in slot 1) to switch0/port1 and port2. From F-node2, we cabled port0 (onboard) and port0 (card in slot 1) to switch1/port1 and port2. We then created an LACP bundle with switch0/port1 and port2 and switch1/port1 and port2. One big 4-port LACP bundle.

Then, back on F-node1, we cabled port1 (onboard) and port1 (card in slot 1) to switch0/port3 and port4. From F-node2, we cabled port1 (onboard) and port1 (card in slot 1) to switch1/port3 and port4. We then created another LACP bundle with switch0/port3 and port4 and switch1/port3 and port4. Another big 4-port LACP bundle.
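If the prose is hard to follow, the same F-node 10Gb cabling can be written as a small table generator. This is only a sketch of the layout described above; the function names and the bundle labels `lacp1` and `lacp2` are my own placeholders, not anything the switches or SolidFire call them.

```python
from collections import defaultdict


def fnode_10g_cabling():
    """Return (node, nic, nic_port, switch, switch_port, bundle) tuples."""
    plan = []
    # F-node1 lands on switch0, F-node2 lands on switch1.
    for node_idx, switch in ((1, "switch0"), (2, "switch1")):
        for nic_port in (0, 1):
            bundle = f"lacp{nic_port + 1}"      # placeholder bundle name
            first_switch_port = 2 * nic_port + 1  # port0 -> 1,2; port1 -> 3,4
            for offset, nic in enumerate(("onboard", "slot1")):
                plan.append((f"F-node{node_idx}", nic, nic_port,
                             switch, first_switch_port + offset, bundle))
    return plan


def bundle_members(plan):
    """Group switch ports by LACP bundle."""
    grouped = defaultdict(list)
    for _node, _nic, _nic_port, switch, switch_port, bundle in plan:
        grouped[bundle].append(f"{switch}/port{switch_port}")
    return dict(grouped)


if __name__ == "__main__":
    plan = fnode_10g_cabling()
    for row in plan:
        print(row)
    print(bundle_members(plan))
```

Each bundle ends up with four member ports, two on each switch, which matches the two 4-port LACP bundles described above.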

We set private network IPs on the 10GbBond interfaces on all nodes (S and F alike). Make sure jumbo frames are enabled throughout the network, or you may receive errors when trying to create the cluster (xDBOperation timeouts). Alternatively, you can set the MTU on the 10GbBond interfaces down to 1500 and retry cluster creation to confirm whether jumbo frames are causing the problem. This is not recommended for a production config; it should only be used to rule out jumbo frame configuration issues.
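A quick way to test jumbo frames from any Linux host on the 10Gb network is a ping with the "don't fragment" bit set and a payload sized to fill the MTU. The sketch below just computes the payload size and builds the command. I am assuming an MTU of 9000 (the post above only says "jumbo frames") and the Linux iputils `ping` options `-M do`, `-s`, and `-c`; the target IP is a placeholder.

```python
IPV4_HEADER_BYTES = 20  # IPv4 header without options
ICMP_HEADER_BYTES = 8   # ICMP echo header


def icmp_payload_for_mtu(mtu):
    """Largest ICMP echo payload that fits in one unfragmented IPv4 packet."""
    return mtu - IPV4_HEADER_BYTES - ICMP_HEADER_BYTES


def jumbo_ping_command(target_ip, mtu=9000, count=3):
    """Build a Linux ping command that fails if the path cannot carry `mtu`.

    mtu=9000 is an assumption; use whatever your 10Gb network is set to.
    """
    return ["ping", "-M", "do", "-s", str(icmp_payload_for_mtu(mtu)),
            "-c", str(count), target_ip]


if __name__ == "__main__":
    # 192.168.100.11 is a placeholder for another node's 10Gb IP.
    print(" ".join(jumbo_ping_command("192.168.100.11")))
```

If the full-size ping fails while the same command built with `mtu=1500` succeeds, something in the path is not passing jumbo frames.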

As mentioned, each F-node has 2x Dual Port FC cards. From Node 1, Card0/Port0 goes to FC switch0/Port0. Card1/Port0 goes to FC switch0/Port1. Card0/Port1 goes to FC switch1/Port0 and Card1/Port1 goes to FC switch1/Port1.

Repeat this pattern for Node 2 but use ports 2 and 3 on the FC switches.
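The FC pattern boils down to two rules: the port number on the card picks the FC switch, and the card number picks the switch port (offset by 2 for the second node). Here is a short sketch that generates the mapping from those rules; the function name and the `F-node1`/`F-node2` labels are mine.

```python
def fc_cabling(node_count=2):
    """Map (node, card/port) to the FC switch port described above."""
    plan = {}
    for node in range(1, node_count + 1):
        base = 2 * (node - 1)  # node 1 uses switch ports 0-1, node 2 uses 2-3
        for card in (0, 1):
            for port in (0, 1):
                # Card port N goes to FC switch N; the card number
                # selects which port on that switch.
                key = (f"F-node{node}", f"Card{card}/Port{port}")
                plan[key] = f"FC switch{port}/Port{base + card}"
    return plan


if __name__ == "__main__":
    for (node, hba_port), switch_port in fc_cabling().items():
        print(f"{node} {hba_port} -> {switch_port}")
```

The result is that every F-node has one port from each card on each FC switch, so losing a card or a switch still leaves a path.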

Storage Nodes (S-Nodes)

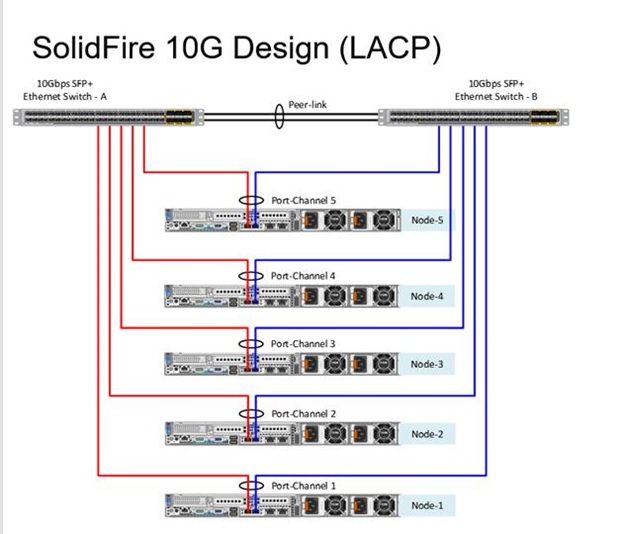

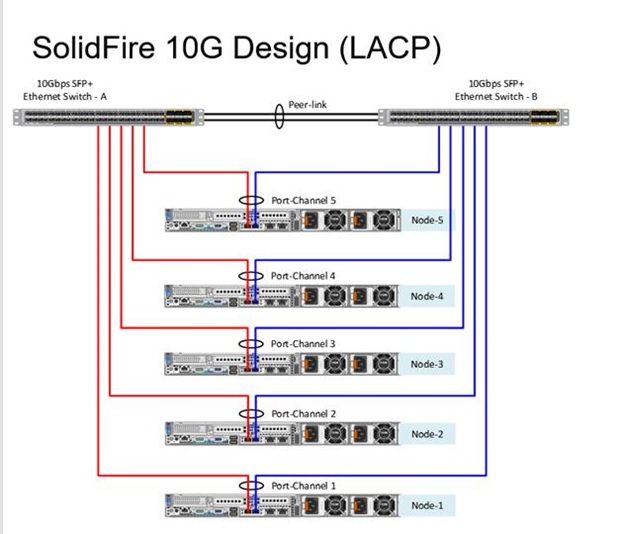

On all S-nodes, the config is pretty simple. Onboard 10Gb port0 goes to switch0 and onboard 10Gb port1 goes to switch1. Create an LACP bundle across the two ports for each node.

If the environment has two trunked switches, where the switches appear as one, you must use LACP (802.3ad) bonding. The two switches must appear as one switch, either by being different switch blades that share a backplane or by running software that makes them appear as a “stacked” switch. The two ports, one on each switch, must be in an LACP trunk for failover from one port to the other to work. If you want to use LACP bonding, make sure the switches support trunking across both switches at the individual port level.
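Before cabling, it can help to sanity-check that every planned LACP bundle really has a leg on each switch. The sketch below does that for a plan written as bundle name to member ports. The bundle names and switch port numbers for the S-nodes are placeholders (I did not list the actual S-node switch ports above), and the helper names are mine.

```python
def spans_both_switches(member_ports):
    """True if the bundle has members on at least two different switches."""
    switches = {port.split("/")[0] for port in member_ports}
    return len(switches) >= 2


def single_homed_bundles(bundles):
    """Return the names of bundles that live entirely on one switch."""
    return sorted(name for name, ports in bundles.items()
                  if not spans_both_switches(ports))


if __name__ == "__main__":
    # Placeholder plan: port numbers are illustrative only.
    plan = {
        "s-node1": ["switch0/port5", "switch1/port5"],
        "s-node2": ["switch0/port6", "switch1/port6"],
        "s-node3": ["switch0/port7", "switch0/port8"],  # deliberate mistake
    }
    print(single_homed_bundles(plan))
```

Any bundle the check reports would lose connectivity if its one switch went down, which defeats the point of the cross-switch LACP trunk.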

There may be other ways to do this. If you have comments or suggestions, please leave them below. If I left something out, let me know.

Some of this was covered nicely in the Setup Guide and the FC Setup Guide, but not in enough depth or detail. I had more questions and had to lean on some good contacts in my network to get answers.

Thanks for reading. Cheers.